Data-Driven Scene Understanding from 3D Models


People

Jason Lin

Martial Hebert

Overview

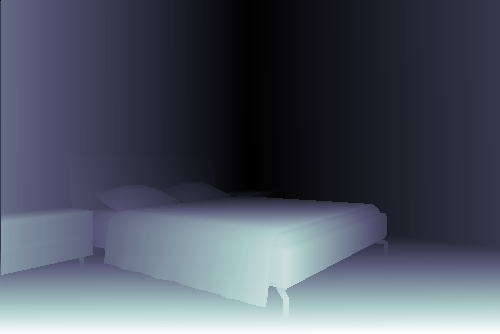

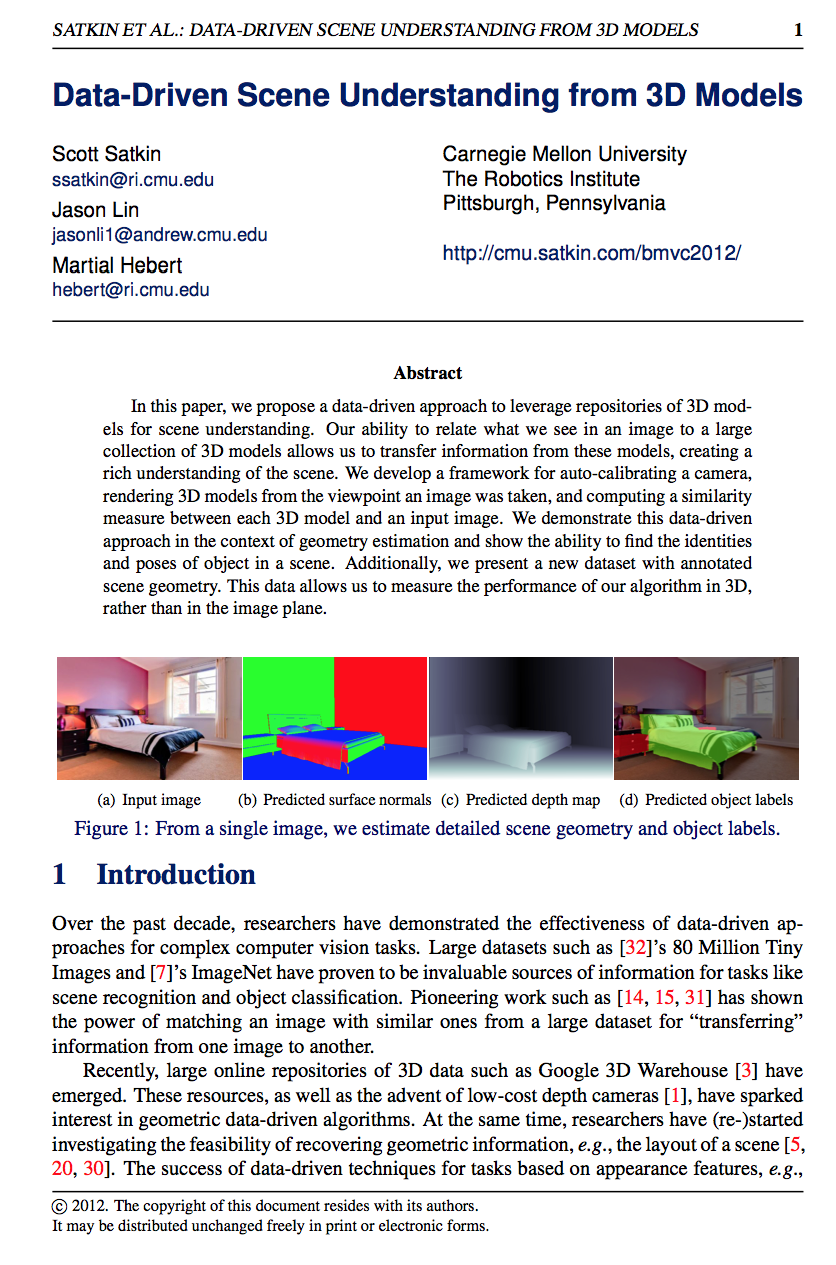

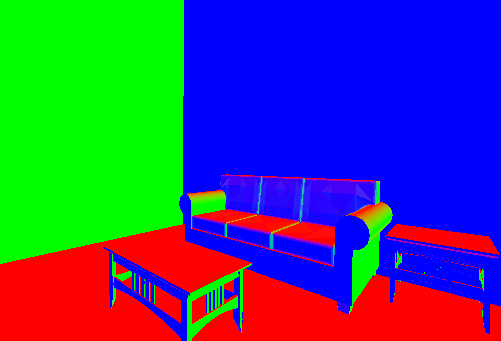

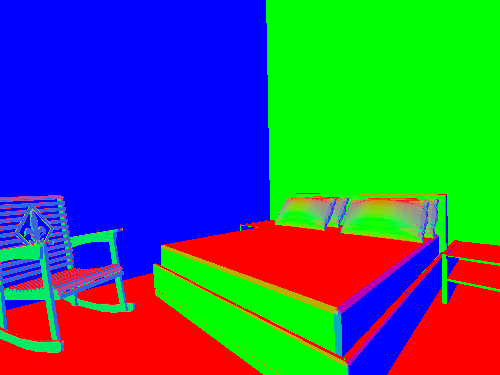

In this paper, we propose a data-driven approach that leverages repositories of 3D models for scene understanding. Relating what we see in an image to a large collection of 3D models allows us to transfer information from these models, yielding a rich understanding of the scene. We develop a framework for auto-calibrating a camera, rendering 3D models from the viewpoint from which an image was taken, and computing a similarity measure between each 3D model and an input image. We demonstrate this data-driven approach in the context of geometry estimation and show that it can recover the identities and poses of objects in a scene. Additionally, we present a new dataset with annotated scene geometry, which allows us to measure the performance of our algorithm in 3D rather than in the image plane.
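The render-and-compare idea above can be illustrated with a small sketch. This is not the paper's method: it assumes a plain pinhole camera with known focal length `f`, principal point `(cx, cy)`, rotation `R` and translation `t` (the output one would expect from calibration), and it uses mean cosine similarity between a rendered surface-normal map and a normal map estimated from the image as a stand-in similarity measure. The function names `project_point`, `normal_similarity` and `rank_models` are hypothetical.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
NormalMap = List[Optional[Vec3]]  # flattened per-pixel normals; None = no data


def project_point(X: Vec3, f: float, cx: float, cy: float,
                  R: Sequence[Sequence[float]], t: Vec3) -> Tuple[float, float]:
    """Project a world point into the image with a pinhole camera (assumed model).

    Camera coordinates are Xc = R X + t; the pixel is (f*x/z + cx, f*y/z + cy).
    """
    xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        raise ValueError("point is behind the camera")
    return (f * xc[0] / xc[2] + cx, f * xc[1] / xc[2] + cy)


def normal_similarity(rendered: NormalMap, estimated: NormalMap) -> float:
    """Mean cosine similarity between corresponding per-pixel normals.

    Pixels where either map has no normal (None) are skipped; returns 0.0
    when no pixel is valid. This scoring rule is an illustrative assumption.
    """
    total, count = 0.0, 0
    for a, b in zip(rendered, estimated):
        if a is None or b is None:
            continue
        dot = sum(ai * bi for ai, bi in zip(a, b))
        na = math.sqrt(sum(ai * ai for ai in a))
        nb = math.sqrt(sum(bi * bi for bi in b))
        total += dot / (na * nb)
        count += 1
    return total / count if count else 0.0


def rank_models(estimated: NormalMap, renderings: Dict[str, NormalMap]) -> List[str]:
    """Return model ids sorted from most to least similar to the image."""
    scores = {mid: normal_similarity(r, estimated) for mid, r in renderings.items()}
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
    print(project_point((1.0, 0.0, 2.0), 500.0, 320.0, 240.0, identity, (0.0, 0.0, 0.0)))
    image_normals = [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0)]
    print(rank_models(image_normals, {
        "box": [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0)],
        "wall": [(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    }))
```

Running the script prints `(570.0, 240.0)` and `['box', 'wall']`. Cosine similarity is used here only because it is insensitive to normal magnitude; a real system would also need occlusion handling when rendering and a more robust comparison.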

Paper


Data-Driven Scene Understanding from 3D Models, S. Satkin, J. Lin and M. Hebert, Proceedings of the 23rd British Machine Vision Conference (BMVC), September 2012.

Presentation


Videolectures.net: Scott Satkin, September 2012.

CMU 3D-Annotated Scene Database

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License.

ASCII files - txt.tar.gz (158MB)

Raw SketchUp files - skp.tar.gz (580MB)

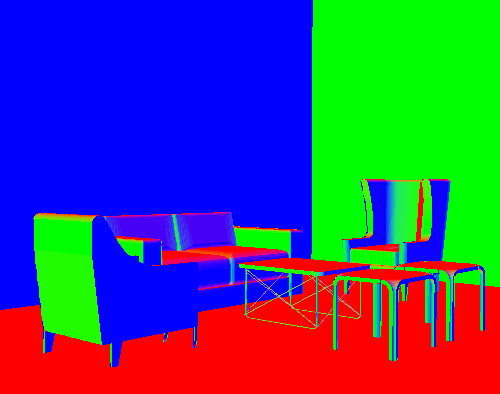

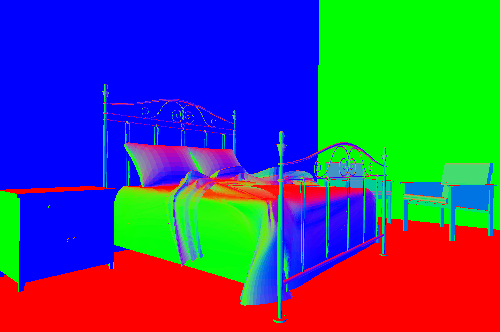

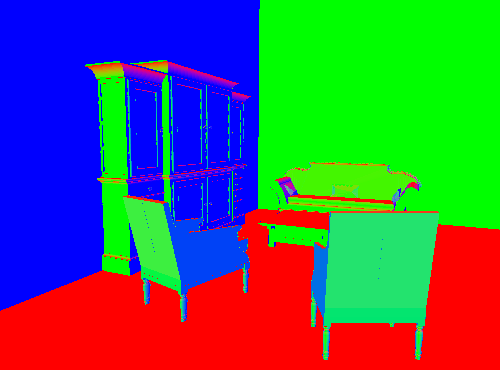

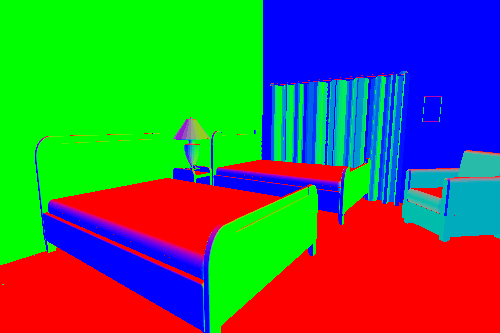

Surface normal & object label renderings - renderings.tar.gz (125MB)

Dataset description - README.txt

Please contact the authors with any additional requests for data.

Funding

This material is based upon work partially supported by the Office of Naval Research under MURI Grant N000141010934.